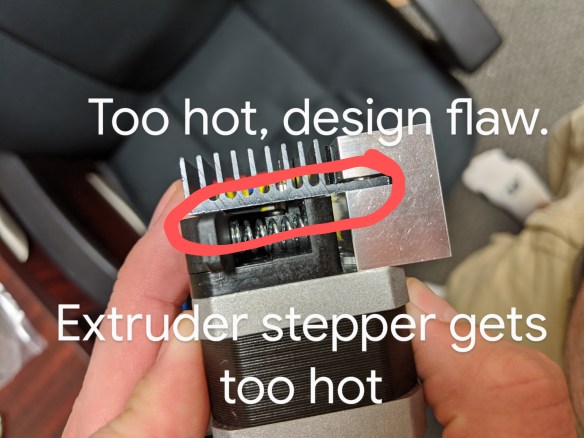

The extruder & hot end on the stock X-Max is a critically flawed design. Several people contributed to a solution that allows alternate extruders and hot ends, including an E3D V6, a Slice Engineering Copperhead or Mosquito, a Trianglelab Dragon, and probably many others. The extruder and hot end change fixes the overwhelming majority of the problems with the QIDI X-Max 3D printer.

This list is for improving this design https://www.thingiverse.com/thing:3994628 in this group https://www.facebook.com/groups/1811744122380955 . The design work is led by Chad Wills, whose work has provided an amazing improvement to this deeply flawed printer.

List of issues in the design as printed on 7/10/2020 for a QIDI X-Max purchased earlier this year (apparently with some revisions compared to older models):

- Bearing Clamp Broke

- Bearing cage is loose on the bearings. The lower duct could help reinforce the clamping with some minor changes, or perhaps a simpler option is zip tie slots that allow tightening the carriage to the bearings despite X-Max revision and print size variations.

- A positive retention belt clip is needed. The belt clip was bowing and offered only a loose compression fit.

- The top of the BMG Clone Extruder hits the metal frame near the end of the Y travel at the front of the machine. This needs another ~3mm drop.

- NOTE: It was actually the screw on the BMG Clone Extruder that was dragging first.

- The cable towers and board mount drag slightly on the frame near the end of the X travel on the left side of the machine. The towers need to be dropped by ~2mm (I need to validate the measurement). The board needs to drop by ~2mm, as the clip on the ribbon cable is the highest point.

- I had to drill out my BMG extruder door holes. A teardrop cut at the top of these might help prevent failure, but this is a minor, nit-picking issue.

- It’s difficult to reach the lower left board screw with the extruder in place.

- Heater Cartridge & Thermocouple board routing cutout is too small and should be widened.

- I’d like to keep the foam rubber on the ribbon cable, but as-is the connector is pushed away from the towers by a lack of clearance. A 1-2mm move should allow the tower to clear.

- Rear 5015 Part Blower Fan impacts the Y Axis stepper when homing. The QIDI isn’t very smart, and if it can’t reach the Y limit switch it will just keep hammering away, trying to self-destruct the printer. I shimmed the Y carriage with tape and a 3mm shim so that it hits the limit switch earlier. This was enough, so a ~3mm shift will allow this to clear.

- It would be nice to find a way to get a second screw on the rear 5015 Part blower fan. If it bumps out of place, the airflow isn’t directed to the part.
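The homing problem from the rear 5015 fan item can be illustrated with a small simulation. This is only a sketch under stated assumptions, not QIDI's firmware: the function `home_axis`, its parameters, and the distances (switch 10mm away, fan contact 3mm short of it) are hypothetical values chosen to mirror the ~3mm shim described in the list. It shows why homing with no travel cap keeps driving into an obstruction, and why making the switch trigger 3mm earlier is enough.

```python
def home_axis(switch_at_mm, obstruction_at_mm=None, max_travel_mm=None, step_mm=1.0):
    """Simulate homing toward a limit switch (hypothetical model, not QIDI firmware).

    Returns (result, commanded_mm), where result is:
      "homed"     - the carriage reached the limit switch
      "aborted"   - a travel cap stopped the move before the switch was reached
      "hammering" - the motor kept pushing against an obstruction until the
                    simulation guard ended the loop
    """
    position = 0.0    # where the carriage actually is
    commanded = 0.0   # how far the stepper has been told to move
    guard_steps = 1000  # simulation guard only; uncapped firmware would never stop

    for _ in range(guard_steps):
        if position >= switch_at_mm:
            return "homed", commanded
        if max_travel_mm is not None and commanded >= max_travel_mm:
            return "aborted", commanded
        commanded += step_mm
        # The carriage only advances if nothing is in the way (e.g. fan vs. stepper).
        blocked = obstruction_at_mm is not None and position + step_mm > obstruction_at_mm
        if not blocked:
            position += step_mm
    return "hammering", commanded


if __name__ == "__main__":
    # Assumed numbers: switch 10mm away, fan contacts the stepper 3mm short of it.
    print(home_axis(10, obstruction_at_mm=7))                    # no cap: keeps pushing
    print(home_axis(10, obstruction_at_mm=7, max_travel_mm=15))  # a cap would give up
    print(home_axis(7, obstruction_at_mm=7))                     # 3mm shim: switch trips first
```

In this model the shim corresponds to lowering `switch_at_mm` by 3, which matches the proposed ~3mm shift of the fan in the design.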